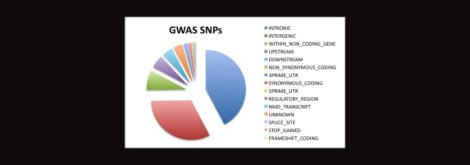

There was a recent article in The New Yorker titled, “Steamrolled by Big Data,” which reminded me of the trend occurring now in the Life Sciences. Even though, unlike Google, we’re not working in such quantities as terabytes or petabytes, the amount of genomic information available has skyrocketed over the last ten years. Even just considering all which has come about from the Genome Wide Association Studies (GWAS) is overwhelming.

And that’s the problem: it’s overwhelming. We’ve figured out ways to very rapidly collect extraordinary amounts of data on living systems, and yet our capacity to fit all these puzzle pieces together is woefully lagging behind. –That’s not to say that since we can’t handle it all we should stop collecting it, but things need to be put into perspective. Unfortunately, we had very grandiose ideas as to how much we would learn from collecting so much genomic and cellular data, ideas which have not panned out. And why is that? Well, Kupiec (2009) suggests that

“… post-genomic biology requires enormous use of bio-computing to integrate the huge quantities of data collected by large-scale transcriptome and proteome analysis. The aim of these programmes is to identify all the RNAs and proteins in a cell in order to establish a map of the interactions they have with each other in the form of networks. It is thus hoped to arrive at a complete description of how a cell functions. However, scientific progress does not result simply from accumulating data. The observations made depend just as much on the theories which guide the research as on the reverse” (p. 2).

The above also applies to the genomic data we’ve collected to date. In spite of all the big data we now have at our fingertips and for all the supercomputers spitting out 1’s and 0’s, science still requires human beings to figure out what it means. But why? As Kupiec would assert, it is because biology is not deterministic– as our current state of genetics theories are still apt to posit– but probalistic. Given that the cell is exceptionally adaptable, interactive, and the boundaries between it and its environment are nominalistic at best, that means that genetic determinism is a woefully simplistic and inadequate concept. In short, it’s just plain wrong.

For awhile there, we had a glimmering hope for a lazy science, one in which we no longer had to think and reason but could simply sit back and wait for a program. With the advent of newer technology, scientists were foolishly hopeful that computers would basically do the work for us as though the calculations were as easy as arithmetic. But most biological data, though informative, is nevertheless ambiguous– tantalizing us with a variety of interpretations. Science requires something more than just calculators. It necessitates– dare I say it?– philosophers. The ways in which we collect data today adhere to the scientific method, but the ways in which we interpret it still hold much in common with modern science’s philosophical predecessor. While methods and technologies have advanced, the human mind still rationalizes the same ways it did during the Renaissance or Ancient Greece. Humans are, after all, human.

Says The New Yorker article:

Some problems do genuinely lend themselves to Big Data solutions. The industry has made a huge difference in speech recognition, for example, and is also essential in many of the things that Google and Amazon do; the Higgs Boson wouldn’t have been discovered without it. Big Data can be especially helpful in systems that are consistent over time, with straightforward and well-characterized properties, little unpredictable variation, and relatively little underlying complexity.

But alas, evolution has thrived on complexity and even the workings of a single cell are still out of our grasp. And the very state of our science reflects that complexity. Just take a wander through PubMed or Google Scholar to get a glimpse at how much data we currently have and how much of it is just sitting there doing nothing. Waiting.

–Meanwhile, while you’re there note how poorly synthesized it all is too. Browsing through search engines can be overwhelming, with hundreds if not thousands of publications being served up for a given query. How is one ever supposed to read through it all? I admit, even from within my own field I’m sure I’m familiar with only a small percentage of related and relevant materials, much less that of other fields of research, and undoubtedly it affects my capacity to interpret new data. In science as a whole, there is considerable inefficiency, squandering, duplication of efforts, and piecemeal leadership. Let’s face it, if we were a company we’d have gone bankrupt long ago.

Hi Emily,

Please comment on the all the data that is going to roll out from the brain mapping projects. Seems like it will put the human genome project to shame.

Newport.jpg

JOHN

K. John Morrow, Jr., PhD.

625 Washington Avenue

Newport, KY 41071

513-237-3303

E-mail:

kjohnmorrowjr@insightbb.com

WEBSITE:

http://www.newportbiotech.com

BLOG:

http://www.newportbiotech.com/pages/blog/

Even though I’m in neuroscience and more money is good money; however, I’m pretty uncertain about the Brain Mapping Project. Compared to the HGP, as I understand it it cost $2.7 billion, so comparatively $100 million (or actually $50 million which goes directly to the research) isn’t all that much. But I kinda suspect that if it actually gets funded, the goals laid out sound rather vague. The whole “mapping” thing gives me doubt whether it’s achievable. There’s enough which is dubious about aspects of neuroimaging. But we’ll see. Who knows whether it’ll even get approved. Republicans do tend to hate to give away money, hehe.

Well said!

And, may your blog never go bankrupt!

There was a « Big Data » conference (the second of a self-congratulatory orgiastic series) in –rather « nrear » –Paris, April 3rd and 4th in the semi-detatched-from-reality corporate glass-and-steel campus monstrosity referred to as « La Défense », in a western suburb of reality-as-most-of-us-know-it. Now, I wouldn’t (again) go near this corporate ghetto except under duress. So, I can’t say that I missed the conference—though I didn’t learn of it until the day after it closed. (Proof that I don’t follow the right corporatist press organs.) In any case, this was my first encounter with the phrase « Big Data. » Upon looking up « Big data » at Wikipedia, I spotted an article referenced in the Harvard Business Review entitled, « Good Data Won’t Guarantee Good Decisions »

(by Shvetank Shah, Andrew Horne, and Jaime Capellá). (Note, the following link is to a preview only : http://hbr.org/2012/04/good-data-wont-guarantee-good-decisions/ar/1 )

The article makes a good point—as far as it goes. But does it really go as far as it ought to in the critical analysis of this phenomenon? Isn’t it interesting, for example, that the title refers to « good » data, when, in fact, the matter concerns « big data »? What’s involved may or may not be good data. Whatever its quality, it concerns lots and lots of data, more data than Hannibal could have transported with all of his elephants. To gain an idea of what « Big Data » means and what Big Data is up to, just have a good look at B.D.’s corporate sponsors at the bottom of the Paris conference’s web-page (link : http://www.bigdataparis.com/2013-uk-index.php ). Clearly, « Big Business » « hearts » « Big Data », Big Time.

( 🙂 Specific Disclaimer on conflict of interest: I have no ties or interests with the following: the corporate bussiness park « La Défense », Harvard Business Review or anything or anyone affiliated with Harvard University, or the authors of the article cited.)

( 🙂 Blanket General Disclaimer of bias: This, your commenter, has an abiding favorable bias toward the views expressed in the article above, and, generally, toward its author, the editor of this site, and, toward the author, cited, Jean-Jacques Kupiec, as well as the general tendency of his work and that of his fellow researchers—including the views experssed in numerous of his published texts.)

——————–

Not to the Editor: Emily, you will want to correct a typographical error in the Tags of this article, where you have Jean-Jacques « Koupiec » for « Kupiec ».

Rx: for general symptoms of stess due to confusion from “Modern technological society”

“ScienceOverAcuppa”, pro re nata.

—Dr. Well-be.

Yeah, I’m still trying to develop an opinion on whether “big data” is “good data”. On the one hand, some of my current work is certainly benefiting from said data, e.g., that arising from the human genome project, etc. On the other hand, it’s kinda useless unless you figure out how to appropriately USE it. So it still takes, like I said, philosophical rationale to contemplate how such vast amounts of data can be put to good use. So, in the case exceptions in which SNP data is just downright false (as a recent bioinformatics article has covered: http://genomemedicine.com/content/5/3/28/abstract), overall I might consider the data “neutral but with potential value”. –Or avec pitfalls, depending on the conclusions drawn from it and whether they’re accurate or not.

PS: Thanks for the typo correction! All fixed.

There are so many important elements surrounding the points you raise. Many of them are touched on, by turns, in the work you cited by J-J Kupiec. Particularly important is your observation–and not the first you’ve raised on this point–of this aspect—

” It necessitates– dare I say it?– philosophers. The ways in which we collect data today adhere to the scientific method, but the ways in which we interpret it still hold much in common with modern science’s philosophical predecessor. ”

In his book, The Trouble With Physics”, Lee Smolin devotes a chapter to the importance for science of what he describes as “Seers and Craftspeople” (chapter 18). The “seers” –which I prefer to call “visionaries”, those, like Darwin and Einstein, for example– correspond most closely to the philosophers you refer to our need for. The craftspeople, as the name suggests, are those who are most adept at the manipulation of instruments, of calculations, of the, shall we say, more practical or applied aspects of science work. Kupiec, as I believe his his writing testifies, apparently possesses the rather rarer combination of visionary and craftsman in science. Science needs visionaries and craftspeople and, when possible, those who combine the approaches of both. But Smolin argues that for décades now, in his examples, in the fields of physics, such visionaries have been anything but welcomed or encouraged and, as a result, the field’s progress has suffered, as he sees it, as a consequence of this predominance of craftspeople at the expense of visionaries.

Perhaps another time the occasion will arise to mention another aspect which for me is worth consideration and that is that all of these problems in the theory and practice of science and research can be found again and again in various other areas and disciplines. If in certain respects, genetics has seen the visionary aspects come to be hostage to a certain dominant paradigm–in this case, a strict and strong deterministic view of cell differentiation and embryogenesis, so, too, has the same sort of thing is seen to have happened in theory and practice in physics–which Smolin recounts–and in finance, where the Black-Scholes formula took precedence over everything, including the most basic realist behavior of actors in a market environment with, again, very harmful consequences.

What is happening has its sources in places which surpass just the realms of physics, genetics or even the natural sciences as a whole. We live in a highly rationalized world where paradigmatic views are now systematically produced and allow little if any room for non-conformists, for visionaries, who, though they may have good ideas about alternatives to the dominant paradigm, have no purchase in the system which is now firmly rooted and resistant to criticism. And all of this comes, I believe, under the very important heading of “philosophy.”

As a footnote to your observation of our “highly rationalized world,” as I’ve mentioned in another comments section on SoaC, we seem to have lost a sense of intellectual romanticism. I really wish that, while we maintain certain beneficial aspects of the rational keep-your-feet-on-the-ground approach to science, we could also rekindle the the excitement scientists once felt for their studies. Granted, issues over money, publication, and the like certainly put a dampener on creativity… but they can’t kill it completely.

Maybe we need to stop taking ourselves so seriously? It seems like science has become a “job” or a “career” and not so much a “need to discover”.

I think it’s a good point; I have to take your word for it, though, as I know or have known personally so very, very few scientists and researchers. Perhaps too many take certain things too seriously. Some might argue that this too often applies to me, too. When one cares very much about something, the temptation to take it too seriously is perhaps often hard to resist–who, after all is on the look-out for this danger?

The worst of coporate management theory and practice is (and for some time has been) moving into the research laboratory and into the lives of more and more scientists. In France, Vincent de Gaulejac has been studying the rise of “management sciences” as a field since the 1970s. In a recent book, he treats the realm of science research specifically in a short conference address published as “La recherche malade du management” (October, 2012) ( as with “Sea-sickness,” or “Home-sickness”) “Management-sickness in Research”

One may stop here.

————————————-

Or, for an somewhat related anecdotal example, read on:

When I think of the kinds of things about modern life which are products of an over-worked rationalized system of authority and control, it’s sometimes difficult to relate the point in a vivid way. But, on occasion, life comes to the rescue as it did a while ago and offers a neat, clear example.

When I arrived at the library a little while ago, with a coffee and something to eat before going in, I settled on the steps of an exterior staircase. One or two others were already seated on the same steps—and, it’s true, I understood that the stairs served as an evacuation route in case of an emergency. Within a minute of my sitting down, a guy came over from the building-and-grounds security and asked that we all clear off the stairs because they’re for emergency evacuation. So, of course we did– the three of us. A minute or two later, the same fellow and one other returned in order to bar the stairs’ passage at the ground level by tying across the way–not just once but in three rungs!–the sort of tape which we commonly see marked “Danger — Do Not Cross”. So, now, instead of three people seated on the stairs, there are three spans of plastic tape (tied in knots at each railing side) across the stairs at the bottom, tied to the railings at the right and left. Thus, before being able to exit in an emergency, now the people evacuating the building have to remove these three spans of tape before they can leave the stairs. I watched this absurd comedy from a few feet away as these guys, so concerned with security, tied off the stairs’ opening–preventing others from doing what I and the other two had done in seating ourselves on the stairs.

When they finished this task on one stair, they went to the opposite one to do the same. I asked, “Doesn’t it strike you as paradoxical that after ordering us off the stairs, you then come and bar them with this tape? If I aksed the chief fire marshall for an opinion, I bet I’d find that this tape across the stairs is itself an infringement of fire safety codes.” ( In other words, which requires more time and difficulty to effect: three seated people getting up and descending a half-dozen steps, or, removing the three spans of tape across the stairs’ exit?) In reply, the one of the security guys says to me, as though it’s a definitive response: “Hey, I’ve got fifteen years experience with the city fire department behind me.”

In short, we can find countless examples of rules applied mindlessly, without regard for common-sense applied to the rule’s original point and purpose. Thus, such idiotic scenes as that which I’ve describe here: security people “enforcing” a view of the rules in a way which ingores actual security in real-life’s terms.

Some suggestions for further reading:

“Bad Management Theories Are Destroying Good Management Practices” by Sumantra Ghoshal; Advanced Institute of Management Research (AIM), UK and London Business School

“Why Do Bad Management Theories Persist? A Comment on Ghoshal” by Jeffrey Pfeffer, ACAD MANAG LEARN EDU March 1, 2005 4:1 96-100;

“The Ph.D. Octopus”, (The Harvard Monthly Magazine, March, 1903) by William James

(complete essay available on-line; E.g. or at The Project Gutenberg eBook, in “Memories and Studies”, by William James)

Maying by “serious” I mean “boring” LOL. But that thing about putting up tape to block off the stairway: how asinine!